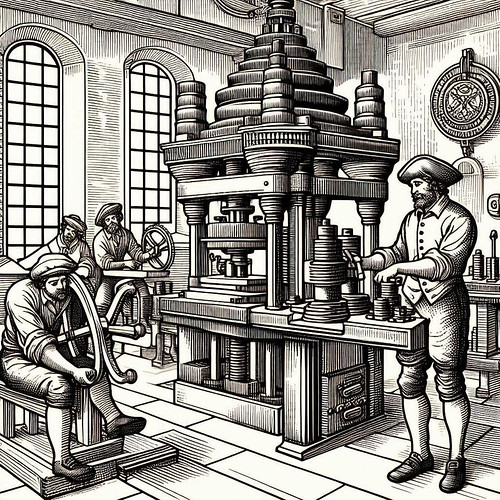

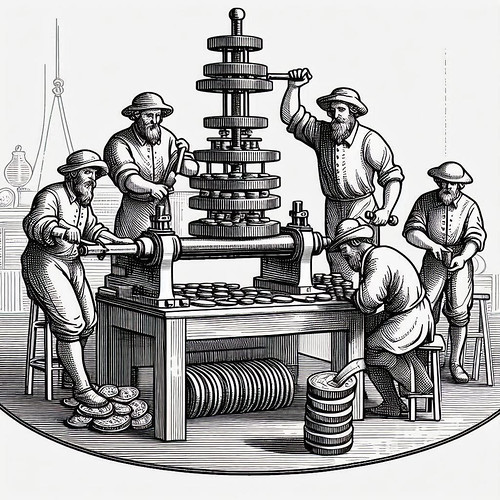

ON AI-GENERATED COIN IMAGES

Gary Beals has been experimenting with AI-generated images and shared these thoughts. Thank you. -Editor

I have had fun creating high-quality images that might end up in a coin book. Working with MS Copilot is like hanging out with a brilliant friend who is also mentally unstable and overconfident in his abilities. All of the artwork has excellent, thoughtful details and elements, yet it can be totally off-base for the purpose the person submitting instructions has in mind.

Oddly, I asked for a Muera Huerta Mexico revolution silver coin and got the real deal. Someone must have put one in Copilot's memory. But I could not find out how to upload material for some future graphics use.

There is a huge technical base for how information and images are gathered and presented. I am not a scientist. I am a guy who ran an advertising and publishing company in San Diego for more than 30 years. When I see a piece of original artwork I usually think of the hours involved in creating it and the wages an illustrator deserves for creating it. From that standpoint AI images can be breathtaking, especially when they can be delivered in seconds.

Somehow AI can see hundreds of thousands of images it uses to compose graphic art elements upon request. But as soon as it creates an image it is blind to it. It cannot see what it generated. Therefore, the additions or corrections we would expect to make with a human are not possible with AI, or at least not with MS Copilot as I was using it. You cannot refer to an image just created and ask the robot to change a background color or add an element or reduce a portion of the image or change the style of the work. Your AI robot cannot see its own work.

The good news is that humans skilled in digital graphic design work can make corrections and changes to the artwork.

If some flaky character wanted to crank out a book on coin collecting, AI could save him lots of time and money by producing unique, original artwork.
Accurate numismatically? Not bloody likely. If he wanted AI to create the cover of his book that might work. Why it loses its mind with words I have no idea.

Nice bust.

This castle was a half-hour drive from my home in Spain. I asked for a drawing of it and got --

For a shipwreck coins story, it can provide local color.

I asked for a bald eagle in line art and was very pleased --

About fly press coin minting it knows nothing, but it still created machines --

Sometimes the device goes nuts and you discover a computer can also lose its mind.

The various attempts to create artwork of fly press operations were a failure. What became clear in this process is that with AI it would be easy to create confusing fake images with text implying that a mechanical operation could be carried out in a certain way when in reality the art is total nonsense.

AI gave me this in the research phase -- but could not use this image to create anything similar.

I would gladly have paid $500 or so for this original line drawing a few years ago. This was free. © GB too!

This is a good version of the Hotel Del Coronado here in San Diego -- I asked for the dolphins.

It can go nuts with stylized graphics, such as might become a medal.

A coin book could have artwork in no time flat. I asked for teens with coin albums, which the robot knows nothing about.

Someone loaded this $1000 coin into Copilot.

I asked for a carpenter in 1880. Old boy is overdressed.

I asked for a California Mission with farming. It is not a specific church, but it is a great illustration.

I asked for useless cents overflowing a vault. Note it has a problem with words.

Have fun!

AI capabilities are improving rapidly, and there are other image generators out there, including DALL-E, Midjourney, Stable Diffusion, and more, each with its own strengths and weaknesses. I understand Ideogram boosts the accuracy of text, and Stable Diffusion allows for more customization and control of your images.
It's an exciting but frustrating time, requiring a lot of experimentation. And not all of these are free, so some investment of money is required in addition to time. But definitely fun. Thanks, Gary!

And right on time, the AI industry has begun rolling out new versions with breakthrough improvements in image generation. The state of the art is advancing rapidly. Here's a post published this morning by Professor Ethan Mollick of Wharton, one of the most influential people in AI. See the complete article online if you're interested in experimenting with these tools. -Editor

Over the past two weeks, first Google and then OpenAI rolled out their multimodal image generation abilities. This is a big deal. Previously, when a Large Language Model AI generated an image, it wasn't really the LLM doing the work. Instead, the AI would send a text prompt to a separate image generation tool and show you what came back. The AI creates the text prompt, but another, less intelligent system creates the image. For example, if prompted “show me a room with no elephants in it, make sure to annotate the image to show me why there are no possible elephants” the less intelligent image generation system would see the word elephant multiple times and add them to the picture. As a result, AI image generations were pretty mediocre with distorted text and random elements; sometimes fun, but rarely useful.

Multimodal image generation, on the other hand, lets the AI directly control the image being made. While there are lots of variations (and the companies keep some of their methods secret), in multimodal image generation, images are created in the same way that LLMs create text, a token at a time. Instead of adding individual words to make a sentence, the AI creates the image in individual pieces, one after another, that are assembled into a whole picture. This lets the AI create much more impressive, exacting images.
Not only are you guaranteed no elephants, but the final results of this image creation process reflect the intelligence of the LLM's “thinking”, as well as clear writing and precise control.

One section particularly resonated with me - "Is it okay to reproduce the hard-won style of other artists using AI? Who owns the resulting art?" -Editor

If you have been following the online discussion over these new image generators, you probably noticed that I haven't demonstrated their most viral use - doing style transfer, where people ask AI to convert photos into images that look like they were made for the Simpsons or by Studio Ghibli. These sorts of application highlight all of the complexities of using AI for art: Is it okay to reproduce the hard-won style of other artists using AI? Who owns the resulting art? Who profits from it? Which artists are in the training data for AI, and what is the legal and ethical status of using copyrighted work for training? These were important questions before multimodal AI, but now developing answers to them is increasingly urgent.

Yet it is clear that what has happened to text will happen to images, and eventually video and 3D environments. These multimodal systems are reshaping the landscape of visual creation, offering powerful new capabilities while raising legitimate questions about creative ownership and authenticity. The line between human and AI creation will continue to blur, pushing us to reconsider what constitutes originality in a world where anyone can generate sophisticated visuals with a few prompts. Some creative professions will adapt; others may be unchanged, and still others may transform entirely. As with any significant technological shift, we'll need well-considered frameworks to navigate the complex terrain ahead. The question isn't whether these tools will change visual media, but whether we'll be thoughtful enough to shape that change intentionally.

Style transfer was precisely the subject my money artist friend J.S.G. Boggs explored in his April 1993 Chicago-Kent Law Review article "Who Owns This?" -Editor

Boggs wrote:

Nothing gets me quite so angry as seeing a visual artist whose style is copied and, in effect, stolen. And it bewilders me that people can get away with it. It happens most often with artists who gain a certain degree of recognition, gained in large part by their style. All the pop artists suffered this, and it is an entirely different situation than using a small part of some other artist's work. In fact, this is a very big problem...

To read the complete article, see:

To read earlier E-Sylum articles, see:

Wayne Homren, Editor

The Numismatic Bibliomania Society is a non-profit organization promoting numismatic literature. See our web site at coinbooks.org. To submit items for publication in The E-Sylum, write to the Editor at this address: whomren@gmail.com

All Rights Reserved.
